Edmail: a peek behind the deliberative curtain

February 24, 2026

On April 7th, 2024, I submitted a public records request to the City of Edmonds, asking for every email sent and received by Edmonds councilmembers in the previous three months (January 1st - April 1st, 2024).

“Please provide all emails sent and received by all active Edmonds City Councilmembers during the period beginning January 1st, 2024 and ending April 1st, 2024. Please provide email records in a native format (i.e., .mbox or .eml – not PDF or other flat format.) Happy to make an on-site visit to obtain digital files if the compiled files have a prohibitive upload size.”

Asking for a native format made it much easier to programmatically process the emails.

Inspired by research using other civic corpuses, I was originally interested in mapping the names of people writing to city councilmembers to voter registration records, so that I could explore the age representativeness of community input over email during this period. A list of sender names (extracted from message metadata) and emails would have sufficed.
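Pulling sender names out of message metadata is a small job once the emails are in a native format. Here is a minimal sketch, assuming each message is available as raw RFC 822 text (as in an .eml file); the `sender_of` helper and the sample messages are hypothetical, made up for illustration.

```python
from collections import Counter
from email import message_from_string
from email.utils import parseaddr


def sender_of(raw_message: str) -> tuple[str, str]:
    """Return (display name, lowercased address) from the From header."""
    msg = message_from_string(raw_message)
    name, address = parseaddr(msg.get("From", ""))
    return name.strip(), address.lower()


# Hypothetical sample messages, not taken from the real dataset.
SAMPLES = [
    "From: Jane Doe <jane@example.com>\nSubject: Sidewalk repair\n\nHello council.",
    'From: "Doe, Jane" <JANE@example.com>\nSubject: Re: Sidewalk repair\n\nThanks.',
    "From: sam@example.org\nSubject: Budget question\n\nHi.",
]

senders = [sender_of(raw) for raw in SAMPLES]

# Count messages per address; display names vary too much to key on directly.
by_address = Counter(address for _, address in senders)
```

Matching those names against voter registration records would be a separate, fuzzier step, since the same person can show up under several display names.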

Then it occurred to me: if it’s all public data anyways, why not shoot for the moon? I wasn’t sure what would happen, but I wanted to find out.

Getting the emails

Shortly after I submitted the request, the City’s (fantastic) public records officer reached out to confirm a few things. Once we were on the same page that yes, I really wanted everything, batches of emails started to appear in the public records request portal.

Chart: emails uploaded by month (12,232 total)

In March 2025 – nearly 11 months into the Great Email Slog – I got a shoutout in Mayor Mike Rosen’s 2025 State of the City address.

N.B. Mike is a very public-minded guy (rewind the video about a minute if you don’t believe me); don’t mistake his tone here for any sort of distaste for the disclosure of public records.

On June 25th, 2025 – more than a year after submitting it – my request was complete. 3.9 GB of .msg files covering 8,293 unique emails now languished in my Downloads folder.
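Counting unique emails in a dump like this means collapsing copies of the same message that appear in more than one mailbox. Here is a minimal sketch of one common approach, which is not necessarily what Edmail does: key each message on its Message-ID header, and fall back to a hash of a few other headers when it is missing. It assumes each message has already been converted to raw RFC 822 text (Python's standard library cannot read .msg files directly); `dedupe_key`, `unique_messages`, and the sample data are hypothetical.

```python
import hashlib
from email import message_from_string


def dedupe_key(raw_message: str) -> str:
    """Prefer the Message-ID header; otherwise hash From, Date and Subject."""
    msg = message_from_string(raw_message)
    message_id = (msg.get("Message-ID") or "").strip()
    if message_id:
        return message_id
    basis = "\n".join((msg.get(h) or "") for h in ("From", "Date", "Subject"))
    return "sha256:" + hashlib.sha256(basis.encode("utf-8")).hexdigest()


def unique_messages(raw_messages: list[str]) -> list[str]:
    """Keep the first copy of each message, in the order encountered."""
    seen: dict[str, str] = {}
    for raw in raw_messages:
        seen.setdefault(dedupe_key(raw), raw)
    return list(seen.values())


# Hypothetical sample: the first two entries are the same email from two mailboxes.
RAW = [
    "Message-ID: <abc123@example.com>\nFrom: jane@example.com\nSubject: Sidewalk repair\n\nHello council.",
    "Message-ID: <abc123@example.com>\nFrom: jane@example.com\nSubject: Sidewalk repair\n\nHello council.",
    "From: sam@example.org\nDate: Mon, 05 Feb 2024 09:00:00 -0800\nSubject: Budget question\n\nHi.",
]

unique = unique_messages(RAW)
```

The header-hash fallback is deliberately crude: two distinct messages with identical sender, date, and subject would collapse into one, so it is only a reasonable default for messages that lack a Message-ID.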

Doing something with them

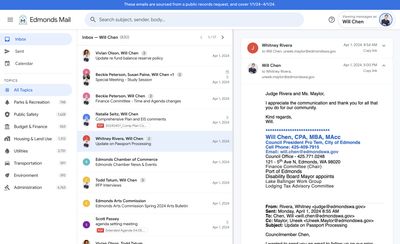

Inspired by the good people at Jmail – whose work is far more important and technically impressive – I spent about two weeks building a webmail-style interface for browsing the messages and calendar events included in the dataset. I used Claude Code to do 90% of the development work; building this cost about $70 in Claude API usage (70% Opus, 30% Sonnet), $50 of which was covered by a one-time promo credit celebrating the release of Opus 4.6.

Here’s what Edmail looks like (and here’s a link):

I am happy to provide the full email dataset to anyone who would like to explore it for public-spirited purposes: just send me an email. (You can also download it from the City’s public records request portal, but the portal is very slow.)

Takeaways

A peek behind the deliberative curtain

This is not a particularly useful dataset. Edmail is not a particularly useful tool. Three months of emails from just seven councilmembers is a pretty limiting sample.

However, the emails offer a fascinating window into the information diet and internal dialogues of elected representatives en masse: from the mundane (scheduling back-and-forths, promotional newsletters, triaging community complaints, TPS reports, etc.) to the exotic (diatribes from wonks and cranks).

Spend 5 minutes scrolling through Edmail, and you’ll see what I mean: at its best, it captures the delicate (and previously invisible) “deliberative fabric” of governance that sits underneath decision outputs.

As someone who followed the outputs (resolutions, legislation, etc.) of the Edmonds City Council over this period, exploring some of its inputs (or at least those captured in email) reminded me of Conway’s law:

[O]rganizations which design systems (in the broad sense used here) are constrained to produce designs which are copies of the communication structures of these organizations.

— Melvin E. Conway, How Do Committees Invent?

Codegen tools and the public interest

LLM code generation tools like Claude Code are now capable enough to accelerate – and cheapen – the process of analyzing and visualizing public data, which is particularly important given the limited resources typically available to public-interest journalists and advocates.

While local governments typically garner more trust among their electorates than state and federal ones, they still have significant room for improvement. Most polling doesn’t distinguish between “government” (i.e. unelected administrators) and “elected representatives”; I’d be curious to see how trust differs between those groups.

Though population sizes and technologies for collecting and disseminating information have changed dramatically over the last 100 years, political institutions in the US rely on largely the same deliberative processes and decision mechanisms: electing a subset of representatives to make decisions (usually via majority vote, in meetings after 6pm on weeknights) on behalf of a larger public.

I think we will soon live in a world with a lot more interfaces for exploring the decision-making that is done on behalf of electorates by their representatives. I am curious how these interfaces will affect public appetite for structural changes to deliberative processes.

Traceability: building on transparency

Working on Edmail got me thinking about “transparency”: a big buzzword in local politics. Unfortunately, the word itself is a little… unclear. (Ha.) Usually, it means some version of “society is better off when we have easy access to candid information on how public decisions are made, and public money is spent.” True!

Policies that predispose public agencies towards openly publishing their operational data products are great (although I expect something like a civic uncertainty principle – perhaps formulated as, “the more intensely a public system is observed, the more its behavior changes in response to that observation” – applies here).

But in my experience, the loudest voices calling for “transparency” in government are often really hoping for something more specific: “traceability”, or the ability to construct and follow the chain of decisions over time that contributes to outcomes of interest. Those outcomes are usually the big, noisy failures: fiscal emergencies, service delivery catastrophes, and the other undesirable results that continue to afflict public entities of all sizes and functions today.

Transparency is a precondition for traceability: you need public information to build a causal chain about public decision-making, but accountability ultimately depends on the quality of the chain you are able to build. I think the notion of “traceability” offers a more testable standard for assessing the extent to which public systems are “transparent.”

The 2010s were a landmark decade for transparency, perhaps exemplified by the proliferation of “open data” portals allowing public access to internal datasets, in no small part because of technological improvements (cloud computing, broadband internet connections…) that made it possible for governments and the public to conceive of sharing and processing this data in a practical manner.

It seems like the 2020s could be a landmark decade for “traceability” along a similar technological dimension: harnessing cheapening software to relate and interconnect large volumes of public data to improve the interpretability of public entities. Emerging projects like CivLab in San Francisco and citymeetings.nyc in New York City also highlight this potential. Imagine a USAFacts for every public corporation in the United States.

I don’t think these tools alone are capable of transforming how people relate to their local governments, but I think they have an important role to play in making people more confident in the public systems that serve them. Long-term, I hope that they crack the door open a little wider to dream about political institutions that can accurately capture the plurality of preferences held by the people they serve, and synthesize that information into public policies that best serve their collective interests.